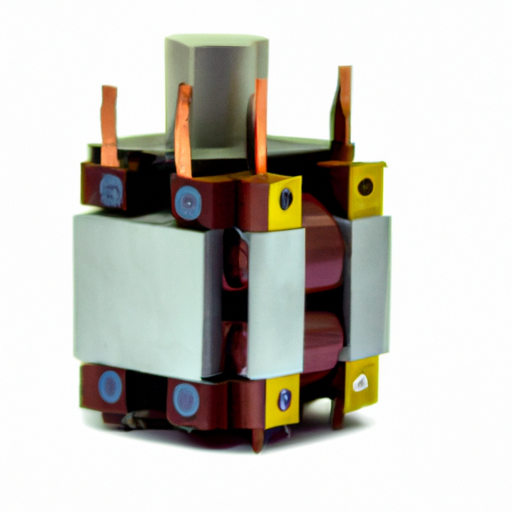

The ECS-F1HE335K Transformers, like other transformer models, illustrate the impact of the transformer architecture across many fields, most notably natural language processing (NLP). The sections below outline the core functional technologies and the application development cases that demonstrate the effectiveness of transformers.

Core Functional Technologies of Transformers

1. Self-Attention Mechanism
2. Positional Encoding
3. Multi-Head Attention
4. Feed-Forward Neural Networks
5. Layer Normalization and Residual Connections
6. Scalability
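
To make the first two items in the list above concrete, here is a minimal sketch of sinusoidal positional encoding and single-head scaled dot-product self-attention in plain Python. It is illustrative only: the function names, the tiny dimensions, and the identity projection matrices are assumptions chosen for readability, not the implementation of any particular model or library. A real transformer would use learned projection weights, several heads, and a tensor library.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(m):
    """Swap rows and columns of a matrix given as a list of rows."""
    return [list(col) for col in zip(*m)]


def positional_encoding(seq_len, d_model):
    """Sinusoidal encoding: sine on even dimensions, cosine on odd ones."""
    pe = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model):
            angle = pos / (10000 ** ((2 * (i // 2)) / d_model))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        pe.append(row)
    return pe


def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention.

    x is seq_len x d_model; w_q, w_k, w_v are d_model x d_k projections.
    Returns the attended values and the attention weight matrix.
    """
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    scores = matmul(q, transpose(k))
    # Scale by sqrt(d_k) before the softmax, then normalise each row.
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v), weights


# Hypothetical toy input: 3 positions, model width 4, identity projections.
identity = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
x = positional_encoding(seq_len=3, d_model=4)
out, weights = self_attention(x, identity, identity, identity)
```

Each row of `weights` sums to 1 and says how strongly one position attends to every other position. Multi-head attention repeats this computation with several independent projection sets and concatenates the results.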

Application Development Cases

1. Natural Language Processing (NLP)
2. Machine Translation
3. Question Answering Systems
4. Image Processing
5. Speech Recognition
6. Healthcare Applications
7. Code Generation and Understanding

Conclusion

The ECS-F1HE335K Transformers and their foundational technologies have proven effective across many domains. Their capacity to model context, scale efficiently, and adapt to varied tasks makes them a cornerstone of contemporary AI applications. As research and development in transformer technology advance, further applications and refinements are likely to extend their impact across industries.